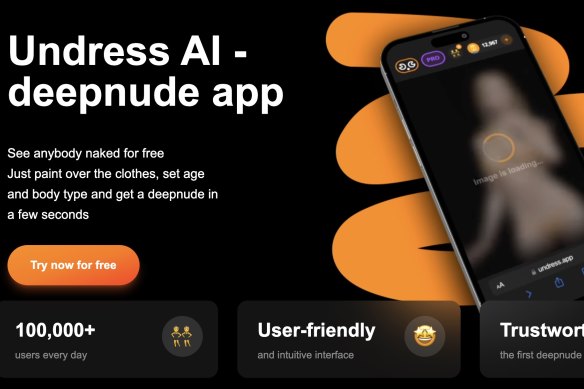

“See anybody nude for free,” the website’s tagline reads.

“Just paint over the clothes, set age and body type, and get a deepnude in a few seconds.”

More than 100,000 people use the “Undress AI” website every day, according to its parent company. Users upload a photo, choose from picture settings like “nude”, “BDSM” or “sex”, and from age options including “subtract five”, which uses AI to make the subject look five years younger.

The result is a “deepnude” image automatically generated for free in less than 30 seconds.

Undress AI is currently legal in Australia, as are dozens of similar apps. But many of them lack adequate controls to stop them from generating images of children.

There is evidence that paedophiles are using such apps to create and share child sexual abuse material, and the tools are also finding their way into schools, including Bacchus Marsh Grammar near Melbourne, where an arrest was made earlier this month.

The use of technology to create realistic fake pornographic images, including of children, is not new. Perpetrators have long been able to use image-editing software such as Photoshop to paste a child’s face onto a porn actor’s body.

What is new is that a job that once took hours of manual labour, a desktop computer and some technical proficiency can now be done in seconds, thanks to the power and efficiency of AI.

These apps, readily available to Australian users through a quick Google search, make it easy for anyone to create a naked image of a child without their knowledge or consent. And they are surging in popularity: web traffic analysis firm Similarweb has found that they receive more than 20 million visitors every month globally.

“Deepnude” apps like Undress AI are trained on real images and data scraped from across the internet.

The tools can be used for legitimate purposes – the fashion and entertainment industries can use them in place of human models, for example – but Australian regulators and educators are increasingly worried about their use in the wrong hands, particularly where children are involved.

Undress AI’s parent company did not respond to requests for an interview.

Schools and families – as well as governments and regulators – are all grappling with the dark underbelly of the new AI technologies.

Julie Inman Grant, Australia’s eSafety commissioner, is the person responsible for keeping all Australians, including children, safe from online harms.

If Inman Grant gets her way, tools like Undress AI will be taken offline, or “deplatformed”, if they fail to adequately prevent the production of child pornography.

This month she launched new standards that, in part, will specifically deal with the websites that can be used to generate child sexual abuse material. They’re slated to come into effect in six months, after a 15-day disallowance period in parliament.

“The rapid acceleration and proliferation of these really powerful AI technologies is quite astounding. You don’t need thousands of images of the person or vast amounts of computing power … You can just harvest images from social media and tell the app an age and a body type, and it spits an image out in a few seconds,” Inman Grant said.

“I feel like this is just the tip of the iceberg, given how powerful these apps are and how accessible they are. And I don’t think any of us could have anticipated how quickly they have proliferated.

“There are literally thousands of these kinds of apps.”

Inman Grant said image-based abuse, including AI-generated deepfake nudes, was commonly reported to her office. She said about 85 per cent of the intimate images and videos reported were successfully removed.

“All levels of government are taking this seriously, and there will be repercussions for the platforms, and for the people who generate this material.”

‘I almost threw up when I saw it’

The issue became a dark reality for students and their parents in June, when a teenager at Bacchus Marsh Grammar was arrested for creating nude images of around 50 of his classmates using an AI-powered tool, then circulating them via Instagram and Snapchat.

Emily, a trauma therapist whose 16-year-old daughter attends the school, told ABC Radio that she saw the photos when she picked her daughter up from a sleepover.

She had a bucket in the car for her daughter, who was “sick to her stomach” on the drive home.

“She was very upset, and she was throwing up. It was incredibly graphic,” Emily said.

“I mean, they are children … The photos were mutilated, and so graphic. I almost threw up when I saw it.

“Fifty girls is a lot. It is really disturbing.”

According to Emily, the victims’ Instagram accounts were set to private, but that didn’t prevent the perpetrator from generating the nude images.

“There’s just that feeling of … will this happen again? It’s very traumatising. How can we reassure them that once measures are in place, it won’t happen again?”

A Victoria Police spokeswoman said no charges had yet been laid and that an investigation was ongoing.

Activist Melinda Tankard Reist leads Collective Shout, a campaign group tackling the exploitation of women and girls. She has met girls whose faces have been superimposed onto explicit images.

Tankard Reist said girls in schools across the country were being traumatised as a result of boys “turning themselves into self-appointed porn producers”.

“We use the term deepfakes, but I think that disguises that it’s a real girl whose face has been lifted from her social media profiles and superimposed on to a naked body,” she said. “And you don’t have to go onto the dark web or some kind of secretive place, it’s all out there in the mainstream.

“I’m in schools all the time, all over the country, and certain schools have received the media focus – but this is happening everywhere.”

The Bacchus Marsh Grammar incident came after another Victorian student, from Melbourne’s Salesian College, was expelled after he used AI-powered software to make “deepnudes” of one of his female teachers.

A gap in the law

In Australia, the law is catching up to the issue.

Until now, specific AI deepfake porn laws existed only in Victoria, where the use of AI to generate and distribute sexualised deepfakes became illegal in 2022.

This month the federal government introduced legislation, now being debated in parliament, to ban the creation and sharing of deepfake pornography. Under the bill, offenders would face jail terms of up to six years for transmitting sexually explicit material without consent, and an additional year if they also created the deepfake.

The legislation will apply to sexual material depicting adults, with child abuse material already dealt with by Australia’s criminal code, according to Attorney-General Mark Dreyfus. AI-generated imagery is already illegal if it depicts a person under the age of 18 in a sexualised manner, he said.

“Overwhelmingly it is women and girls who are the target of this offensive and degrading behaviour. And it is of growing concern, with new and emerging technologies making it easier for abuse like this to occur,” Dreyfus said.

“We brought this legislation to the parliament to respond to a gap in the law. Existing criminal offences do not adequately cover instances where adult deepfake sexual material is shared online without consent.”

The federal government has also brought forward an independent review of the Online Safety Act to ensure it’s fit for purpose.

Noelle Martin is a lawyer and researcher. At 18, she was targeted by sexual predators who fabricated and shared deepfake pornographic images of her without her consent.

For Martin, the younger a victim-survivor, the worse the harm.

“The harm to victim-survivors of fabricated intimate material is just as severe as if the intimate material was real, and the consequences of both can be lethal,” she said.

“For teenage girls especially, experiencing this form of abuse can make it harder to navigate daily life, school, and enter the job market.”

This abuse “could deprive victims of reaching their full potential and potentially derail their hopes and dreams”, Martin said.

Martin wants all parties in the deepfake pipeline to be held accountable for facilitating abuse, including social media sites which advertise deepfake providers, Google and other search engines which direct traffic to them, and credit card providers who facilitate their financial transactions.

“Ultimately, laws are just one part of dealing with this problem,” she said. “We also need better education in schools to prevent these abuses, specialist support services for victims, and robust means to remove this material once it’s been distributed online.

“But countries, governments, law enforcement, regulators and digital platforms will need to co-operate and co-ordinate to tackle this problem. If they don’t, this problem is only going to get worse.”

Copyright for syndicated content belongs to the linked source: The Age – https://www.theage.com.au/technology/inside-the-ai-deepnude-apps-infiltrating-australian-schools-20240627-p5jpaa.html?ref=rss&utm_medium=rss&utm_source=rss_technology