As the US moves toward criminalizing deepfakes (deceptive AI-generated audio, images, and videos that are increasingly hard to distinguish from authentic content online), tech companies have rushed to roll out tools to help everyone better detect AI content.

But efforts so far have been imperfect, and experts fear that social media platforms may not be ready to handle the ensuing AI chaos during major global elections in 2024, despite tech giants committing to making tools specifically to combat AI-fueled election disinformation. The best AI detectors remain observant humans, who, by paying close attention to deepfakes, can pick up on flaws like AI-generated people with extra fingers or AI voices that speak without pausing for a breath.

Among the splashiest tools announced this week, OpenAI shared details today about a new AI image detection classifier that it claims can detect about 98 percent of AI outputs from its own sophisticated image generator, DALL-E 3. It also “currently flags approximately 5 to 10 percent of images generated by other AI models,” OpenAI’s blog said.

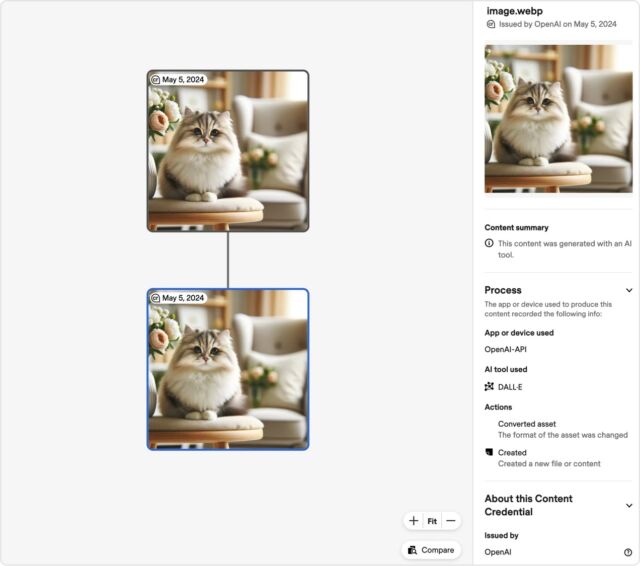

According to OpenAI, the classifier provides a binary “true/false” response “indicating the likelihood of the image being AI-generated by DALL·E 3.” A screenshot of the tool shows that it can also display a straightforward content summary confirming that “this content was generated with an AI tool,” along with fields that ideally flag the “app or device” and the AI tool used.

To develop the tool, OpenAI spent months adding tamper-resistant metadata to “all images created and edited by DALL·E 3” that “can be used to prove the content comes” from “a particular source.” The detector reads this metadata to accurately flag DALL-E 3 images as fake.

That metadata, often likened to a nutrition label, follows “a widely used standard for digital content certification” set by the Coalition for Content Provenance and Authenticity (C2PA). And reinforcing that standard has become “an important aspect” of OpenAI’s approach to AI detection beyond DALL-E 3, OpenAI said. When OpenAI broadly launches its video generator Sora, C2PA metadata will be integrated into that tool as well, OpenAI said.

Of course, this solution is not comprehensive because that metadata could always be removed, and “people can still create deceptive content without this information (or can remove it),” OpenAI said, “but they cannot easily fake or alter this information, making it an important resource to build trust.”
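The difference between removing provenance data and faking it can be illustrated with a short sketch. This is not OpenAI's detector or the C2PA format: real C2PA manifests rely on certificate-based signatures embedded in the file, while the example below substitutes a shared-secret HMAC from Python's standard library to keep things small. The key and the function names `sign_manifest` and `check_provenance` are hypothetical and exist only for illustration.

```python
import hashlib
import hmac
import json

# Hypothetical demo key. Real C2PA signing uses X.509 certificates,
# not a shared secret like this one.
SIGNING_KEY = b"demo-key-not-a-real-credential"


def sign_manifest(image_bytes, claims):
    """Bind provenance claims to an image by hashing the image and signing the result."""
    payload = dict(claims, image_sha256=hashlib.sha256(image_bytes).hexdigest())
    body = json.dumps(payload, sort_keys=True).encode()
    signature = hmac.new(SIGNING_KEY, body, hashlib.sha256).hexdigest()
    return {"payload": payload, "signature": signature}


def check_provenance(image_bytes, manifest):
    """Return 'verified', 'invalid', or 'no provenance data'."""
    if manifest is None:
        # Stripped metadata proves nothing either way.
        return "no provenance data"
    body = json.dumps(manifest["payload"], sort_keys=True).encode()
    expected = hmac.new(SIGNING_KEY, body, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        # The claims were edited after signing.
        return "invalid"
    if manifest["payload"]["image_sha256"] != hashlib.sha256(image_bytes).hexdigest():
        # The manifest was attached to a different or modified image.
        return "invalid"
    return "verified"


image = b"stand-in for image bytes"
manifest = sign_manifest(image, {"generator": "example-image-model"})
print(check_provenance(image, manifest))  # intact image and manifest
print(check_provenance(image, None))      # metadata stripped
```

In this sketch, stripping the manifest leaves the checker with nothing to evaluate, which mirrors OpenAI's caveat that the information can be removed. Editing the claims or reusing the manifest on another image fails the check, which is the sense in which the data is hard to fake or alter.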

Because OpenAI is all in on C2PA, the AI leader announced today that it would join the C2PA steering committee to help drive broader adoption of the standard. OpenAI will also launch a $2 million fund with Microsoft to support broader “AI education and understanding,” seemingly in part because it hopes that the more people understand about the importance of AI detection, the less likely they will be to remove this metadata.

“As adoption of the standard increases, this information can accompany content through its lifecycle of sharing, modification, and reuse,” OpenAI said. “Over time, we believe this kind of metadata will be something people come to expect, filling a crucial gap in digital content authenticity practices.”

OpenAI joining the committee “marks a significant milestone for the C2PA and will help advance the coalition’s mission to increase transparency around digital media as AI-generated content becomes more prevalent,” C2PA said in a blog post.


Copyright for syndicated content belongs to the linked source: Ars Technica – https://arstechnica.com/?p=2022700